Teaching an AI to Paint Like Monet: A Deep Dive into DCGANs from Scratch

I taught a neural network to paint like Monet. A complete walkthrough of building a DCGAN from scratch, covering the math, PyTorch implementation, and training.

As a weekend project, I dove into generative models with something I’ve been eager to try: teaching a neural network to paint in the style of the great Impressionist Claude Monet. This post documents the journey, from analyzing Monet’s iconic style to implementing a Deep Convolutional Generative Adversarial Network (DCGAN) from scratch. You can check out the source code on GitHub.

The “Why”: Capturing Impressionism with Adversarial Nets

The challenge of artistic style transfer is not just about mimicking colors or shapes; it’s about capturing the essence of an artist’s technique, their brushstrokes, their use of light, and their compositional tendencies. Monet’s work, characterized by its soft focus, vibrant yet natural color palettes, and emphasis on light’s ephemeral qualities, presents a fascinating and complex style for an AI to learn.

While various techniques for style transfer exist, Generative Adversarial Networks (GANs) are particularly well-suited for this task. A GAN consists of two neural networks, a Generator and a Discriminator, locked in a competitive game. The Generator’s goal is to create images so realistic that they fool the Discriminator, whose job is to distinguish real images from fake ones. This adversarial process forces the Generator to learn the intricate and subtle patterns of the training data, rather than just creating a superficial copy.

I chose the DCGAN architecture specifically because its use of convolutional layers is highly effective at learning hierarchical spatial features, which is essential for understanding the composition and texture of paintings.

Understanding the Canvas: An Exploration of Monet’s Style

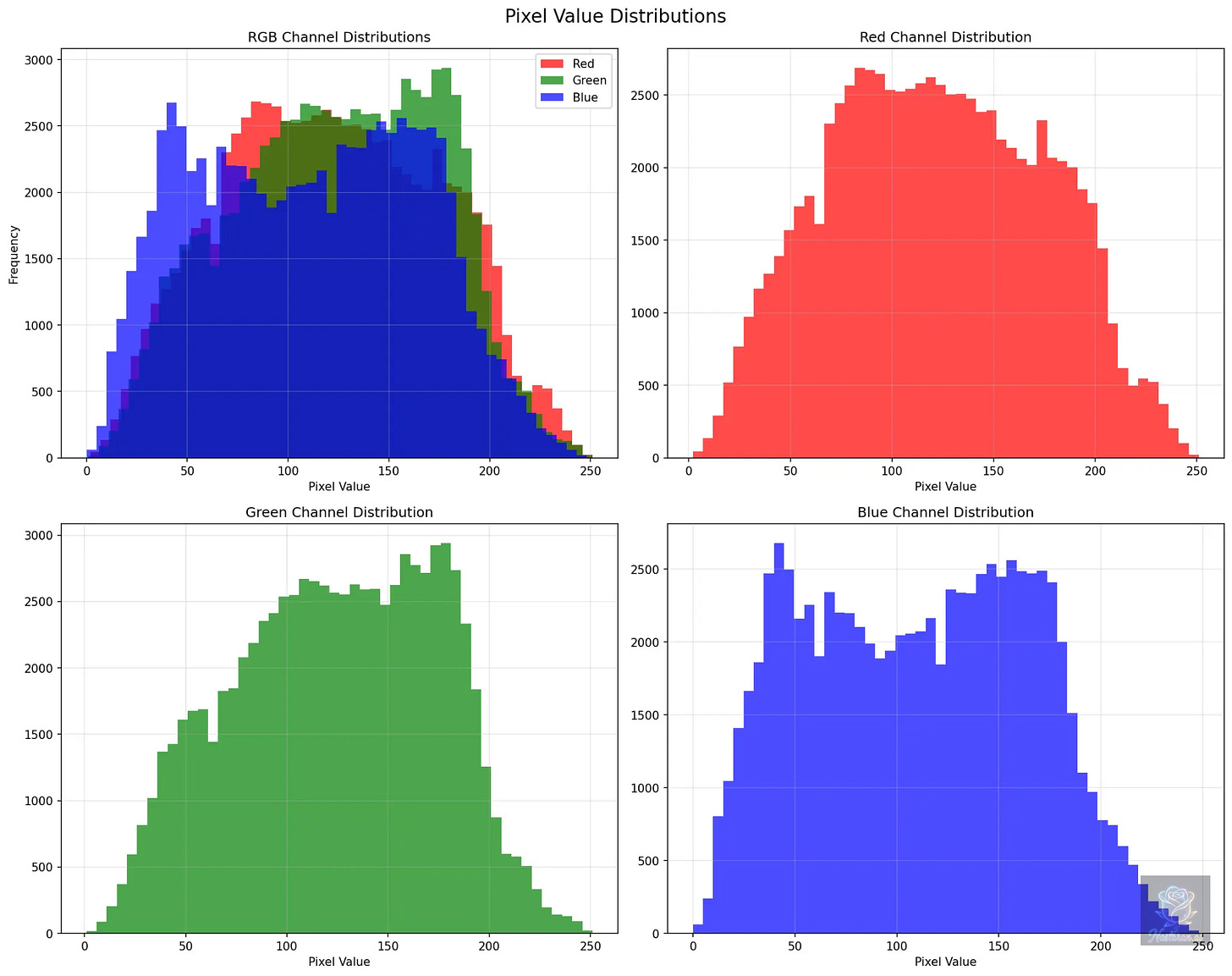

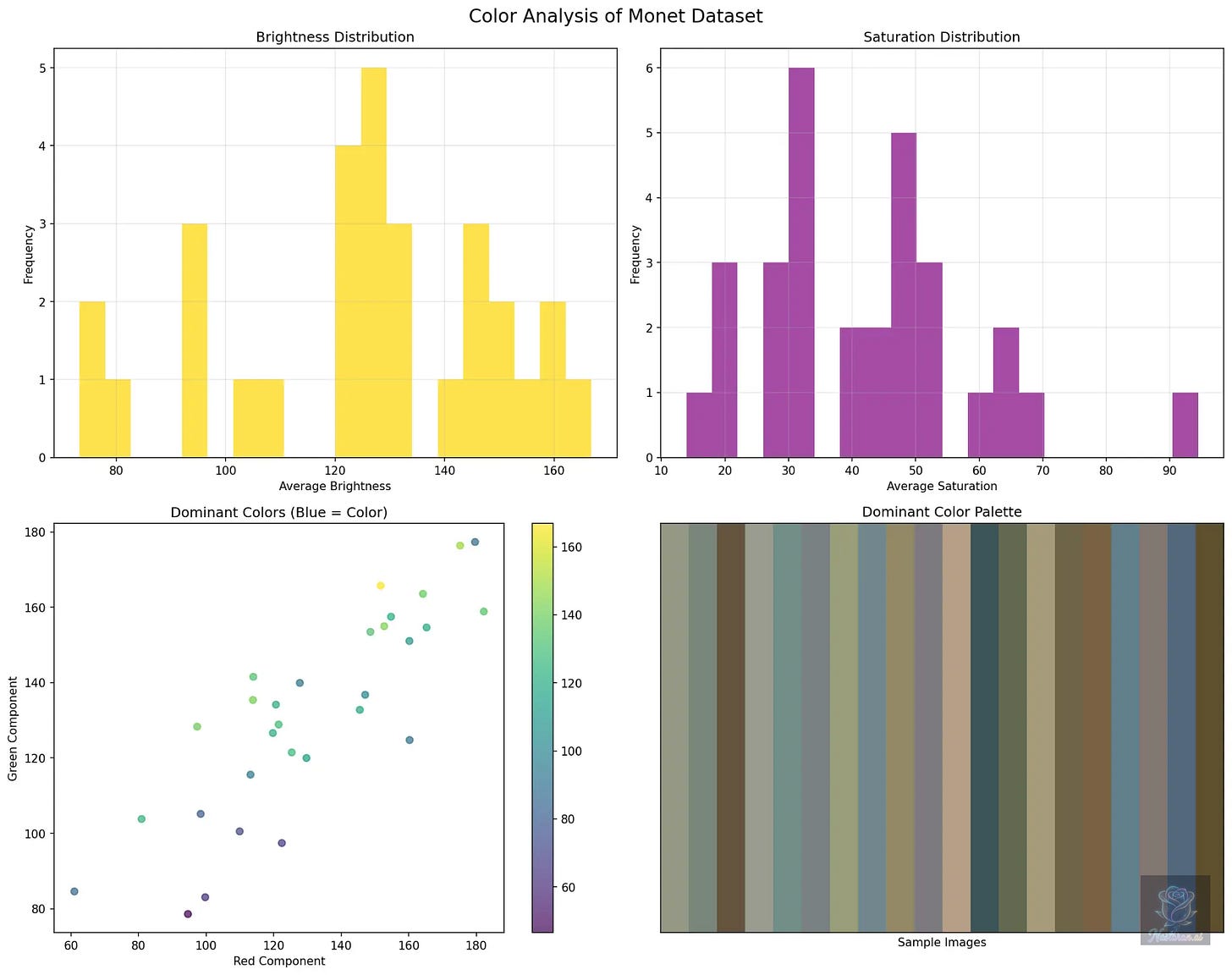

Before building the model, I first had to understand my data. The dataset consists of 300 high-quality digital reproductions of Monet’s paintings. Although the dataset is small, a thorough Exploratory Data Analysis (EDA) revealed a remarkable consistency in Monet’s style, which I believed a model could learn.

My analysis of the color profiles showed that Monet’s paintings have a distinct palette. The pixel distribution histograms revealed a slight bias towards warmer tones, with the blue channel showing the highest variance, likely reflecting his famous water lilies and sky scenes. The color analysis further confirmed this, showing a tendency towards mid-range brightness and a wide range of saturation, which gives his work its characteristic vibrancy.
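A minimal sketch of how per-channel statistics like these could be computed with NumPy. The `channel_stats` helper and the random stand-in batch are hypothetical, used only so the example runs on its own; real code would load the paintings instead.

```python
import numpy as np

def channel_stats(images: np.ndarray) -> dict:
    """Per-channel statistics for a uint8 RGB batch of shape (N, H, W, 3)."""
    pixels = images.reshape(-1, 3).astype(np.float64)
    # One 256-bin intensity histogram per channel (R, G, B).
    histogram = np.stack(
        [np.bincount(images[..., c].ravel(), minlength=256) for c in range(3)]
    )
    return {
        "mean": pixels.mean(axis=0),
        "variance": pixels.var(axis=0),
        "histogram": histogram,
    }

# Stand-in data (assumption): random pixels instead of the Monet reproductions.
rng = np.random.default_rng(0)
images = rng.integers(0, 256, size=(8, 32, 32, 3), dtype=np.uint8)
stats = channel_stats(images)
```

Comparing `stats["variance"]` across the three channels is one way to check which channel varies most in a given dataset.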


This initial analysis was crucial. It not only provided a quantitative fingerprint of Monet’s style but also informed my decisions for preprocessing the data, such as normalizing the images to a range that would be suitable for the GAN’s activation functions.
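As a sketch of that normalization step, assuming 8-bit RGB inputs and a Tanh output layer (so the target range is [-1, 1]), the mapping and its inverse might look like this. The function names and the random stand-in batch are hypothetical.

```python
import numpy as np

def to_tanh_range(images_uint8: np.ndarray) -> np.ndarray:
    """Map uint8 pixels in [0, 255] to float32 values in [-1, 1]."""
    return images_uint8.astype(np.float32) / 127.5 - 1.0

def to_uint8(images: np.ndarray) -> np.ndarray:
    """Invert the mapping so generated samples can be saved as images."""
    scaled = (np.clip(images, -1.0, 1.0) + 1.0) * 127.5
    return np.round(scaled).astype(np.uint8)

# Stand-in batch (assumption): random pixels in place of real paintings.
rng = np.random.default_rng(0)
batch = rng.integers(0, 256, size=(4, 64, 64, 3), dtype=np.uint8)
normalized = to_tanh_range(batch)
restored = to_uint8(normalized)
```

The inverse mapping matters in practice because the Generator's outputs live in the same [-1, 1] range and have to be converted back before they can be viewed.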

The Adversarial Dance: The Mathematics of a GAN

The competitive dynamic between the Generator (G) and the Discriminator (D) is formalized through a minimax loss function. The goal is to find a balance, a Nash equilibrium, where the Generator produces images that are indistinguishable from reality and the Discriminator is no better than 50/50 at telling them apart. The value function, V(D, G), is expressed as:

$$\min_G \max_D V(D, G) = \mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)] + \mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$$

Let’s break this down:

$\mathbb{E}_{x \sim p_{\text{data}}(x)}[\log D(x)]$: This term represents the Discriminator’s ability to correctly identify real images. The Discriminator’s output, D(x), is the probability that an image ‘x’ from the real data distribution is authentic. The Discriminator wants to maximize this term, driving D(x) towards 1 (real).

$\mathbb{E}_{z \sim p_z(z)}[\log(1 - D(G(z)))]$: This term represents the Discriminator’s ability to identify fake images. The Generator creates an image G(z) from a random noise vector ‘z’, and the Discriminator then evaluates this fake image. The Generator wants to fool the Discriminator, so it tries to make D(G(z)) as close to 1 as possible, which in turn minimizes this term. The Discriminator, on the other hand, wants to correctly identify the fake image, so it tries to make D(G(z)) as close to 0 as possible, which maximizes this term.

By training these two networks against each other, the Generator becomes progressively better at capturing the underlying distribution of the real data, which in this case, is the artistic style of Monet.
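To make the value function concrete, here is a small sketch that estimates V(D, G) from batches of Discriminator outputs. The probabilities are made-up illustrative numbers, not measurements from this project.

```python
import math

def value_function(d_real, d_fake):
    """Batch estimate of V(D, G) from discriminator outputs.

    d_real: D(x) for real images, each strictly between 0 and 1
    d_fake: D(G(z)) for generated images, each strictly between 0 and 1
    """
    real_term = sum(math.log(p) for p in d_real) / len(d_real)
    fake_term = sum(math.log(1.0 - p) for p in d_fake) / len(d_fake)
    return real_term + fake_term

# A confident, correct Discriminator pushes V towards its maximum of 0.
v_strong_d = value_function([0.99, 0.98], [0.01, 0.02])
# A fooled Discriminator (fakes scored as real) drives V strongly negative.
v_fooled_d = value_function([0.99, 0.98], [0.99, 0.98])
# At the 50/50 equilibrium every output is 0.5, so V = -log(4).
v_equilibrium = value_function([0.5, 0.5], [0.5, 0.5])
```

The ordering `v_fooled_d < v_equilibrium < v_strong_d` reflects the tug of war: the Discriminator pulls V up, the Generator pulls it down.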

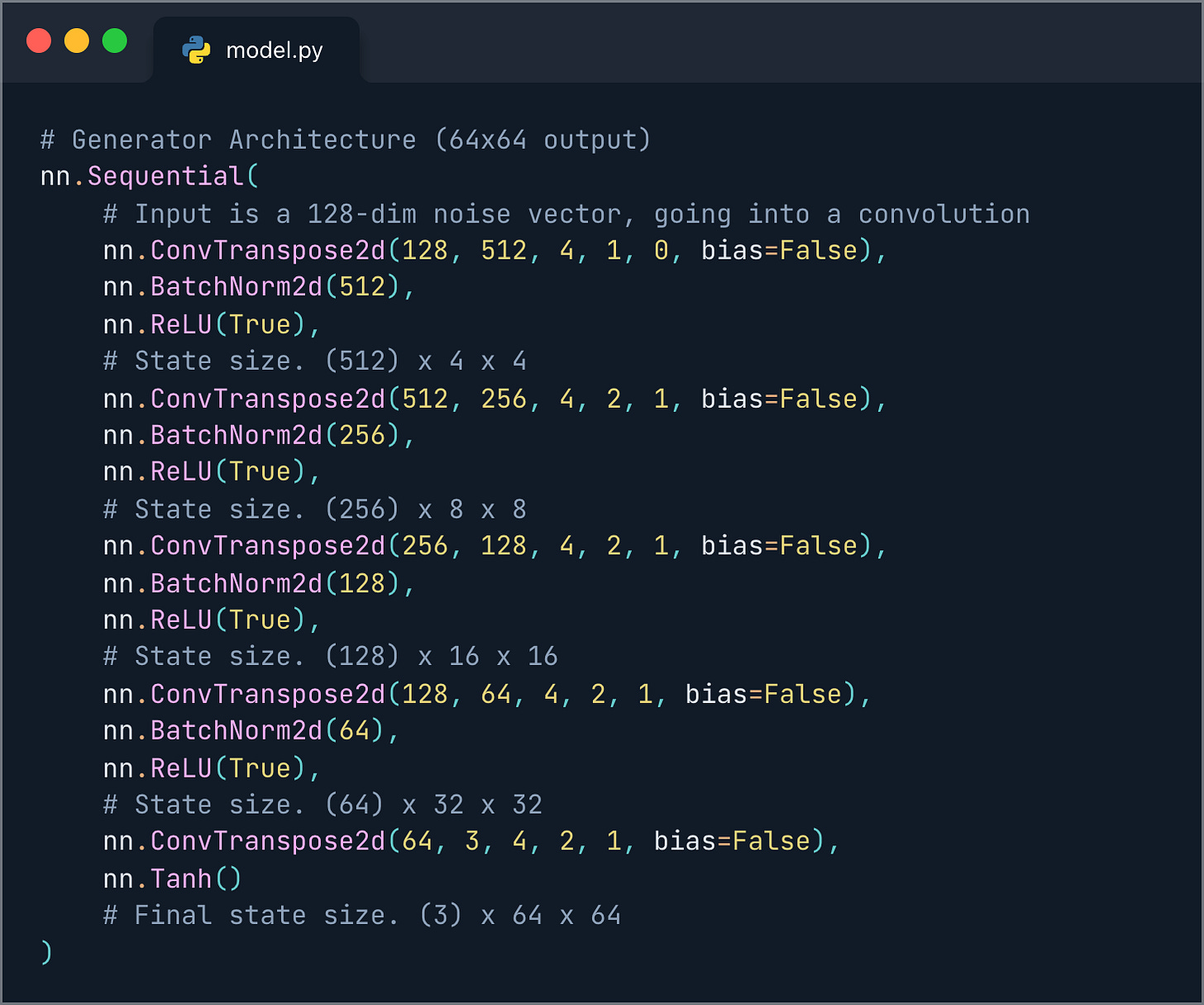

Building the Brushes: The DCGAN Architecture

Following the principles of the original DCGAN paper [1], I designed the Generator and Discriminator networks using PyTorch. The key is using transposed convolutions in the Generator to upsample from a random noise vector into a full image, and standard strided convolutions in the Discriminator to downsample an image into a single probability.

Here is a simplified view of the Generator’s architecture:
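The sketch below is one plausible layout in the spirit of the DCGAN paper, not necessarily the exact network from the repository. The latent size of 100, the base width of 64 channels, the 64x64 RGB output, and the ReLU activations in the hidden layers are assumptions taken from the paper's defaults. Following the paper, the output layer has no batch normalization.

```python
import torch
from torch import nn

LATENT_DIM = 100  # assumed size of the noise vector z
FEATURES = 64     # assumed base channel width

class Generator(nn.Module):
    def __init__(self, latent_dim=LATENT_DIM, features=FEATURES, channels=3):
        super().__init__()

        def up_block(in_ch, out_ch):
            # Transposed conv (4x4 kernel, stride 2) doubles height and width.
            return [
                nn.ConvTranspose2d(in_ch, out_ch, kernel_size=4, stride=2,
                                   padding=1, bias=False),
                nn.BatchNorm2d(out_ch),
                nn.ReLU(inplace=True),
            ]

        self.net = nn.Sequential(
            # z: (latent_dim, 1, 1) -> (features * 8, 4, 4)
            nn.ConvTranspose2d(latent_dim, features * 8, kernel_size=4,
                               stride=1, padding=0, bias=False),
            nn.BatchNorm2d(features * 8),
            nn.ReLU(inplace=True),
            *up_block(features * 8, features * 4),  # 4x4 -> 8x8
            *up_block(features * 4, features * 2),  # 8x8 -> 16x16
            *up_block(features * 2, features),      # 16x16 -> 32x32
            # Output layer: 32x32 -> 64x64, Tanh squashes values to [-1, 1].
            nn.ConvTranspose2d(features, channels, kernel_size=4, stride=2,
                               padding=1, bias=False),
            nn.Tanh(),
        )

    def forward(self, z):
        return self.net(z)

generator = Generator()
fake = generator(torch.randn(2, LATENT_DIM, 1, 1))  # shape: (2, 3, 64, 64)
```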

The architecture uses a 4x4 kernel for the convolutional layers, which is a common choice in DCGANs to learn spatial features effectively. The stride of 2 in the transposed convolutions allows the network to double the spatial dimensions at each layer, effectively upsampling the image. Batch normalization is used after each layer to stabilize training, and the final Tanh activation function squashes the output pixel values to be between -1 and 1, matching the normalization of the input data.
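The doubling can be checked with the standard output-size formulas for (transposed) convolutions. This sketch assumes a padding of 1, no output padding, and no dilation, none of which are stated above, and a 4x4 starting feature map grown to 64x64 for illustration.

```python
def conv_transpose_out(size, kernel=4, stride=2, padding=1):
    """Output size of a transposed convolution (no output padding or dilation)."""
    return (size - 1) * stride - 2 * padding + kernel

def conv_out(size, kernel=4, stride=2, padding=1):
    """Output size of a regular strided convolution (no dilation)."""
    return (size + 2 * padding - kernel) // stride + 1

# Upsampling path: each stride-2 transposed convolution doubles the size.
sizes = [4]
while sizes[-1] < 64:
    sizes.append(conv_transpose_out(sizes[-1]))
# sizes is now [4, 8, 16, 32, 64]

# The same kernel, stride, and padding in a regular convolution halve it.
halved = conv_out(64)  # 32
```

With a 4x4 kernel and stride 2, a padding of 1 is what makes the factor exactly two in both directions.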

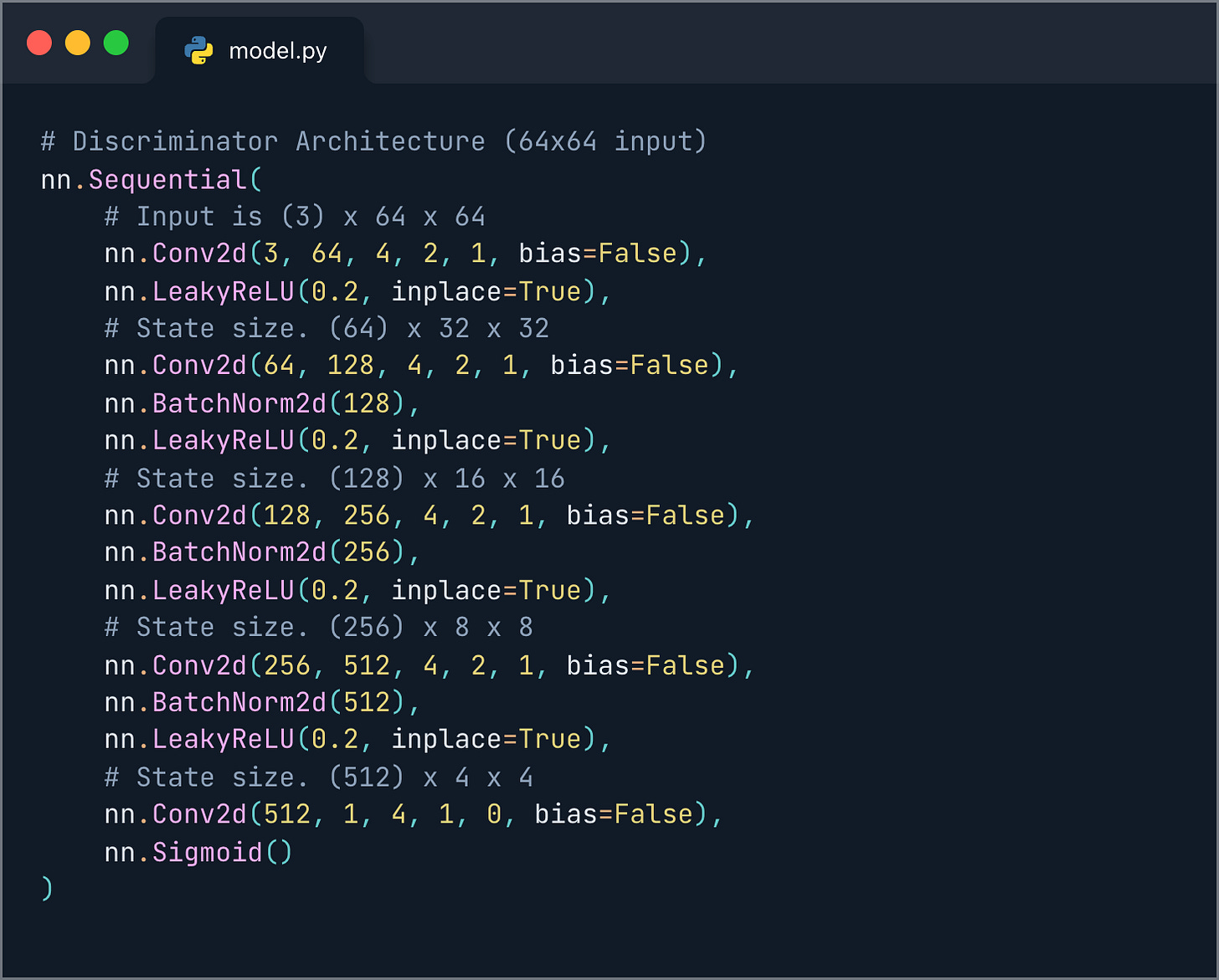

The Discriminator is essentially a mirror image of the Generator:
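As with the Generator, the following is a hedged sketch rather than the exact network from the repository. It assumes 64x64 RGB inputs, a base width of 64 channels, and a LeakyReLU slope of 0.2 (the DCGAN paper's default). Following the paper, the input layer has no batch normalization.

```python
import torch
from torch import nn

class Discriminator(nn.Module):
    def __init__(self, features=64, channels=3):
        super().__init__()

        def down_block(in_ch, out_ch):
            # Strided conv (4x4 kernel, stride 2) halves height and width.
            return [
                nn.Conv2d(in_ch, out_ch, kernel_size=4, stride=2,
                          padding=1, bias=False),
                nn.BatchNorm2d(out_ch),
                nn.LeakyReLU(0.2, inplace=True),
            ]

        self.net = nn.Sequential(
            # Input layer: 64x64 -> 32x32.
            nn.Conv2d(channels, features, kernel_size=4, stride=2,
                      padding=1, bias=False),
            nn.LeakyReLU(0.2, inplace=True),
            *down_block(features, features * 2),      # 32x32 -> 16x16
            *down_block(features * 2, features * 4),  # 16x16 -> 8x8
            *down_block(features * 4, features * 8),  # 8x8 -> 4x4
            # Collapse the 4x4 map to a single score per image.
            nn.Conv2d(features * 8, 1, kernel_size=4, stride=1,
                      padding=0, bias=False),
            nn.Sigmoid(),
        )

    def forward(self, x):
        # (N, 1, 1, 1) -> (N,): one probability per image.
        return self.net(x).view(-1)

discriminator = Discriminator()
scores = discriminator(torch.randn(2, 3, 64, 64))
```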

Here, strided convolutions downsample the image at each step. I used LeakyReLU as the activation function, which is a common practice in GANs to prevent sparse gradients, a problem that can occur with standard ReLU. The final Sigmoid activation outputs a single probability, the network’s guess as to whether the input image is real or fake.

The Artist at Work: Training

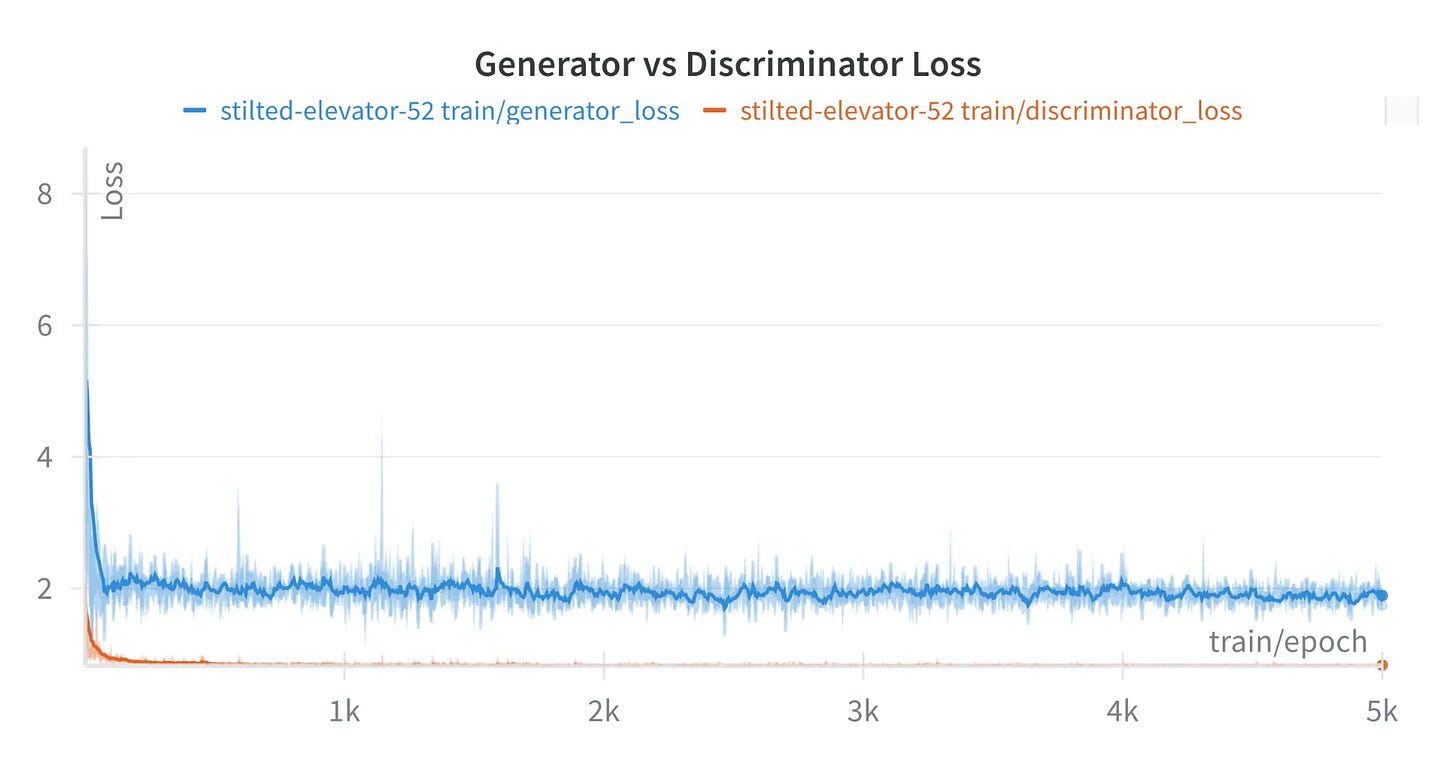

I trained the DCGAN for 5,000 epochs on my Apple Silicon-based machine. The entire process took just over an hour, which is a testament to the efficiency of the M-series chips for ML workloads. Throughout the training, I used Weights & Biases (WandB) to log the loss metrics and visualize the generated images at different stages. This was invaluable for monitoring the training dynamics.
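For readers who want to see the shape of the loop, here is a minimal sketch of one alternating update using binary cross-entropy. The tiny stand-in networks, the 8x8 random "images", and the Adam settings (learning rate 2e-4, beta1 0.5, taken from the DCGAN paper) are assumptions for illustration, not the configuration used in this project. The generator update uses the common non-saturating form (maximize log D(G(z))) instead of the literal minimax term.

```python
import torch
from torch import nn

def train_step(generator, discriminator, real, opt_g, opt_d, latent_dim):
    """One alternating update: Discriminator first, then Generator."""
    criterion = nn.BCELoss()
    batch = real.size(0)
    ones, zeros = torch.ones(batch), torch.zeros(batch)

    # Discriminator: push D(x) towards 1 and D(G(z)) towards 0.
    fake = generator(torch.randn(batch, latent_dim, 1, 1))
    opt_d.zero_grad()
    loss_d = (criterion(discriminator(real), ones)
              + criterion(discriminator(fake.detach()), zeros))
    loss_d.backward()
    opt_d.step()

    # Generator: push D(G(z)) towards 1 so the fakes are scored as real.
    opt_g.zero_grad()
    loss_g = criterion(discriminator(fake), ones)
    loss_g.backward()
    opt_g.step()
    return loss_d.item(), loss_g.item()

# Tiny stand-in networks for 8x8 images keep the example fast (assumption).
latent_dim = 16
generator = nn.Sequential(
    nn.ConvTranspose2d(latent_dim, 8, 4, 1, 0), nn.BatchNorm2d(8), nn.ReLU(),
    nn.ConvTranspose2d(8, 3, 4, 2, 1), nn.Tanh(),           # 4x4 -> 8x8
)
discriminator = nn.Sequential(
    nn.Conv2d(3, 8, 4, 2, 1), nn.LeakyReLU(0.2),            # 8x8 -> 4x4
    nn.Conv2d(8, 1, 4, 1, 0), nn.Sigmoid(), nn.Flatten(0),  # -> (N,)
)
opt_g = torch.optim.Adam(generator.parameters(), lr=2e-4, betas=(0.5, 0.999))
opt_d = torch.optim.Adam(discriminator.parameters(), lr=2e-4, betas=(0.5, 0.999))

real = torch.rand(4, 3, 8, 8) * 2 - 1  # stand-in batch already in [-1, 1]
loss_d, loss_g = train_step(generator, discriminator, real, opt_g, opt_d, latent_dim)
```

Calling `fake.detach()` in the Discriminator update keeps that step from sending gradients into the Generator. The two returned losses are the kind of values one would log at each step.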

The loss curves show the characteristic oscillatory behavior of a healthy GAN training process. Neither network overpowers the other for too long, indicating a stable learning environment. It was fascinating to watch the generated images evolve from pure noise into something that started to resemble a coherent, Monet-like scene.

Results and Reflections

After 5,000 epochs, the model was able to generate convincing, novel images that captured the essence of Monet’s style. The generated images exhibit the soft textures, impressionistic light, and characteristic color palettes found in his work.

Here is a sample of the generated images at different points in the training process:

Epoch 500

Epoch 5000

This project was a rewarding experience that deepened my understanding of GANs and the practical nuances of training them. It was a powerful reminder that behind the complex mathematics and code, the goal of generative AI is to create, to learn, and, in this case, to even appreciate art in a new way. The full code and a more detailed technical report are available on my GitHub repository.

I hope this post was insightful, and I welcome any questions or discussions. If you’re working on generative models or exploring computer vision, I’d be happy to connect and exchange ideas.

References

[1] Radford, A., Metz, L., & Chintala, S. (2015). Unsupervised Representation Learning with Deep Convolutional Generative Adversarial Networks. arXiv preprint arXiv:1511.06434.

[2] Goodfellow, I. J., Pouget-Abadie, J., Mirza, M., Xu, B., Warde-Farley, D., Ozair, S., … & Bengio, Y. (2014). Generative adversarial nets. In Advances in neural information processing systems (pp. 2672-2680).

[3] I’m Something of a Painter Myself. (2021). Kaggle. Retrieved from https://www.kaggle.com/competitions/gan-getting-started